Large Language Models - UPSC Science And Technology

What are Large Language Models in UPSC Science And Technology?

Large Language Models (LLMs) are a key topic under Science And Technology for the UPSC Civil Services Examination. Key points: LLMs are AI models trained on vast datasets to understand and generate human language. They handle common language tasks such as text classification, question answering, and text generation. Commonly cited architectural types include autoregressive models (e.g., GPT-3), Transformer-based models (e.g., Gemini), and encoder-decoder models; these labels overlap, since GPT-3 is itself built on the Transformer. Understanding this topic is essential for both UPSC Prelims and Mains preparation.

Why are Large Language Models important for the UPSC exam?

Large Language Models are a Medium-level topic in UPSC Science And Technology. The topic is tested in both Prelims (factual MCQs) and Mains (analytical answer writing). Previous years' UPSC questions have frequently covered aspects of Large Language Models, making the topic essential for comprehensive IAS preparation.

How should you prepare Large Language Models for UPSC?

To prepare Large Language Models for UPSC: (1) Study the comprehensive notes covering all key concepts on Vaidra. (2) Practice previous years' questions on this topic. (3) Connect the topic with current affairs using daily updates. (4) Revise using the key takeaways and mind maps available for Science And Technology. (5) Write practice answers linking Large Language Models to related GS Paper topics.

Key takeaways of Large Language Models for UPSC

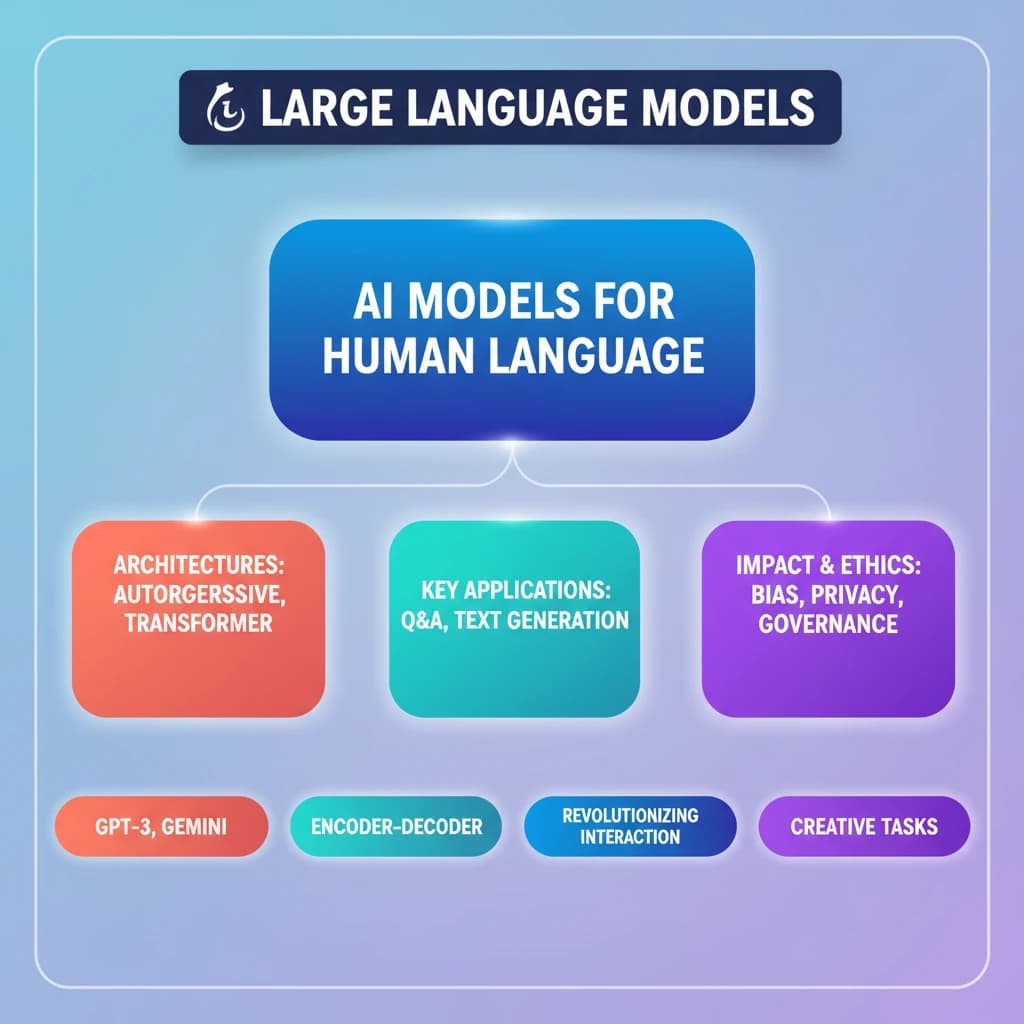

- LLMs are AI models trained on vast datasets to understand and generate human language.

- They solve common language problems like text classification, Q&A, and text generation.

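- Commonly cited architectural types include autoregressive models (e.g., GPT-3), Transformer-based models (e.g., Gemini), and encoder-decoder models. These labels overlap: GPT-3, for example, is itself built on the Transformer.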

- LLMs are revolutionizing human-computer interaction and creative tasks.

- Their development raises important ethical and regulatory considerations regarding bias, privacy, and governance.
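To make the term "autoregressive" in the takeaways above concrete, here is a minimal sketch in Python. It is a toy word-count (bigram) model and not a neural network or a Transformer, so it only illustrates the generation loop: each new word is predicted from the output produced so far. The function names `train_bigram` and `generate` and the sample corpus are illustrative assumptions, not part of any real LLM library.

```python
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows


def generate(follows, start, max_words=5):
    """Autoregressive loop: predict each new word from the last word generated."""
    output = [start]
    for _ in range(max_words - 1):
        candidates = follows.get(output[-1])
        if not candidates:
            break  # no known continuation for this word
        # Greedy decoding: pick the most frequent follower seen in training.
        output.append(candidates.most_common(1)[0][0])
    return " ".join(output)


# Hypothetical toy corpus, chosen only for illustration.
corpus = "the model predicts the next word and the model predicts text"
model = train_bigram(corpus)
print(generate(model, "the", max_words=3))  # prints "the model predicts"
```

Real LLMs replace the word counts with a neural network trained on vast datasets and usually sample from a probability distribution rather than always taking the top choice, but the loop of feeding each output back in as the next input is the same idea.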

Large Language Models

📖 Introduction

🧠 Memory Techniques